This case study describes how one company, GradeCam, runs load tests with increasing load. It covers their challenges and setbacks, as well as their results and benefits. Thanks to Joseph Bonifacio, lead QA at GradeCam.

About GradeCam

GradeCam is a provider of easy and innovative assessment solutions that empower educators to minimize workloads and maximize learning. Whether it’s bridging the distance between in-class and online assignments, saving time scoring and transferring grades, or aggregating flexible and shareable data, GradeCam simplifies and streamlines tasks so teachers can focus on teaching. And perhaps best of all, it doesn’t require any proprietary forms, special equipment, or time-intensive training to get started.

Objective of Load Tests

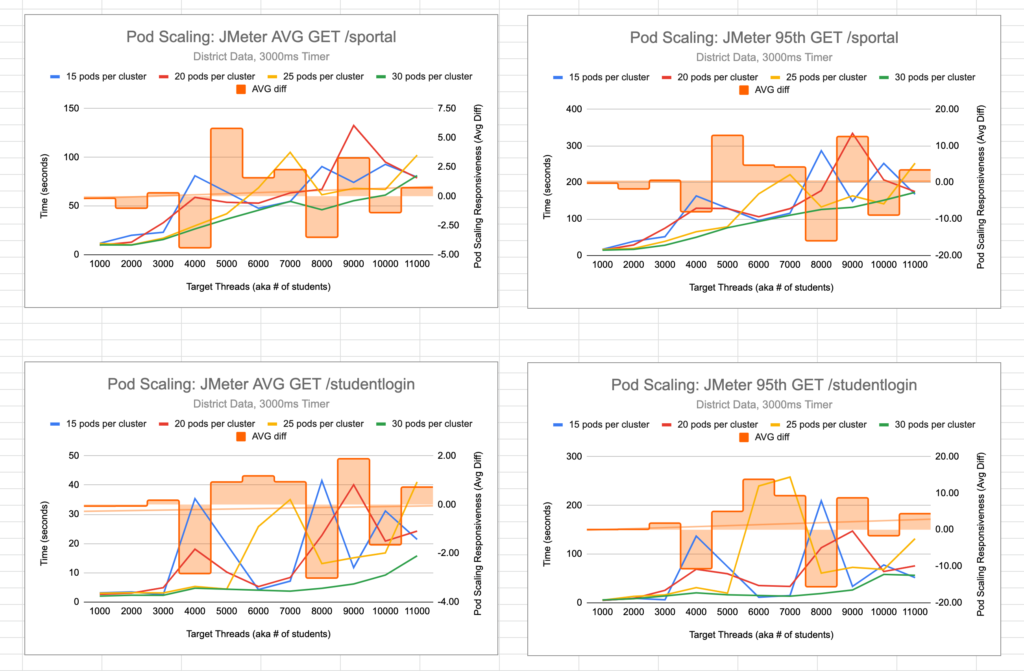

Scaling changes were proposed. One proposal was to test whether scaling the number of pods per cluster would be a simple, short-term change that could improve performance if needed. The results show the average and 95th percentile for two of the calls across the increasing, stepped load tests. As GradeCam interpreted them, the results gave very light evidence that they could consistently expect improved performance from scaling the architecture alone, at least for these two specific endpoints.

GradeCam has a separate testing environment that closely resembles their production environment. They use the testing environment to test changes with code merges or DevOps modifications that either (1) improve performance or (2) have a risk of degrading performance. Before applying or merging changes, they use data-driven testing to compare performance before and after.

Unlike other systems that might experience a ramp-up shaped like a bell curve, student users are more likely to log in within short bursts and then use the system with sustained activity. This results in a load profile that looks like “steps” up, followed by a gradual leveling off as users sign off.
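To make the shape of that profile concrete, here is a minimal Python sketch of a stepped load curve. It is only an illustration: the function name, the step size, the hold time, and the linear taper at the end are all assumptions, not GradeCam’s actual numbers or tooling.

```python
def stepped_profile(users_per_step, steps, hold_minutes, ramp_down_minutes):
    """Return the active-user count for each minute of a stepped load profile."""
    profile = []
    # Each step adds a burst of users at once, then holds that level.
    for step in range(1, steps + 1):
        profile.extend([users_per_step * step] * hold_minutes)
    # After the last step, users sign off gradually (assumed linear taper to zero).
    peak = users_per_step * steps
    for minute in range(1, ramp_down_minutes + 1):
        profile.append(round(peak * (1 - minute / ramp_down_minutes)))
    return profile


# Hypothetical values: three bursts of 500 users, each held for 2 minutes.
profile = stepped_profile(users_per_step=500, steps=3, hold_minutes=2, ramp_down_minutes=4)
print(profile)
```

Plotting the returned list against time gives the staircase up and the gradual slope down described above, in contrast to a bell-shaped ramp.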

Steps taken to gradually run load tests with increasing load

GradeCam uses JMeter to generate load against their systems. They run separate tests with an increasing number of threads to simulate the “stepped” bursts of activity. In this case the load is so high that they also increase the number of AWS instances.

This approach allows them to reproduce the “spikes” in their users’ behavior. Their test users behave like students taking an online test, and students normally begin a test within a short period of time. By splitting their load into separate tests, they can simulate the first ten or fifteen minutes of their workflow and increase the volume with each subsequent test.

Besides providing a more realistic workflow, stepped load tests allow them to look for a ceiling and to compare results across the different tests.
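As a rough illustration of comparing steps and looking for a ceiling, the Python sketch below summarizes each test by its average and 95th percentile and reports the first thread count whose 95th percentile exceeds a limit. The sample timings, the 500 ms limit, and the function names are hypothetical, and the nearest-rank percentile used here is just one common definition; it may not match how JMeter or RedLine13 report percentiles.

```python
from statistics import mean


def p95(samples):
    """95th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def summarize(results_by_threads):
    """Map each thread count to the average and p95 of its response times (ms)."""
    return {
        threads: {"avg": mean(times), "p95": p95(times)}
        for threads, times in results_by_threads.items()
    }


def find_ceiling(summary, p95_limit_ms):
    """Return the smallest thread count whose p95 exceeds the limit, or None."""
    for threads in sorted(summary):
        if summary[threads]["p95"] > p95_limit_ms:
            return threads
    return None


# Hypothetical response times (ms) from two separate stepped tests.
results = {
    1000: [100] * 19 + [200],
    2000: [150] * 18 + [900, 950],
}
summary = summarize(results)
ceiling = find_ceiling(summary, p95_limit_ms=500)
print(summary, ceiling)
```

Keeping each step as its own test makes this kind of side-by-side summary straightforward, because every thread count has its own isolated set of samples.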

Challenges/Setbacks

A recurring challenge is test data management. With lighter loads, it is easy to generate test data in a JMeter setUp thread or even to leave test data in the environment. However, as the number of threads increases by thousands, more and more realistic data has to be created and managed.

To overcome this, they created a test data framework that automates test data generation as needed. This required more up-front time and development, but as a result they have been able to execute performance tests on RedLine13 with relative ease across different test executions and test cases.
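The case study does not describe how GradeCam’s framework works internally. As a simplified sketch of the general idea, the Python below generates a unique set of test users on demand and writes them as CSV, a format that JMeter’s CSV Data Set Config element can read. The field names, the `loadtest` prefix, and the password scheme are purely hypothetical.

```python
import csv
import io


def generate_students(count, prefix="loadtest"):
    """Yield `count` unique, predictable test-user records (hypothetical fields)."""
    for i in range(1, count + 1):
        yield {"username": f"{prefix}_student_{i:05d}", "password": f"pw-{i:05d}"}


def write_csv(rows, out):
    """Write user records with a header row to any file-like object."""
    writer = csv.DictWriter(out, fieldnames=["username", "password"])
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


# Write to an in-memory buffer here; a real run would write to a file instead.
buffer = io.StringIO()
write_csv(generate_students(3), buffer)
print(buffer.getvalue())
```

Because the records are produced by a generator, the same code scales from a handful of users to thousands by changing a single argument.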

Results/Benefits

They have begun using the results that RedLine13 collects from their JMeter tests, together with their system metrics platform, to make data-driven decisions about their system architecture and performance. Comparison testing allows them to apply new configurations with more confidence.
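A before-and-after comparison of this kind can be sketched in a few lines of Python. The endpoint names, the timings, and the 10% tolerance are hypothetical and chosen only for illustration; this is not GradeCam’s actual comparison logic.

```python
def compare_runs(baseline_ms, candidate_ms, tolerance=0.10):
    """Flag endpoints whose metric worsened by more than `tolerance` (a fraction)."""
    report = {}
    for endpoint, before in baseline_ms.items():
        after = candidate_ms[endpoint]
        change = (after - before) / before  # positive means slower
        report[endpoint] = {"change": change, "regressed": change > tolerance}
    return report


# Hypothetical 95th-percentile times (ms) before and after a configuration change.
baseline = {"/login": 400, "/submit": 800}
candidate = {"/login": 300, "/submit": 1000}
report = compare_runs(baseline, candidate)
for endpoint, row in report.items():
    print(endpoint, f"{row['change']:+.0%}", "REGRESSED" if row["regressed"] else "ok")
```

Running the same comparison after every change gives a consistent yes-or-no signal per endpoint instead of relying on eyeballing two sets of charts.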

In addition, they have expanded their metric observation to track trends over time. This helps them understand how their system’s performance evolves and catch unexpected regressions from changes whose performance risk may initially have been downplayed.

Free Trial

RedLine13 offers a free trial that includes all of our Premium features. Sign up now and start increasing your load today!